Crossposted from world spirit sock puppet.

I hear that many people believe that the idea of advanced AI threatening human existence was invented by AI CEOs to hype their products. I’ve even been condescendingly informed of this, as if I am the one at risk of naively accepting AI companies’ preferred narratives.

If you are reading this, you are probably familiar enough with the decades-old AI safety community to know this isn’t true. But I don’t have a good direct way to reach the people who could use this information, and still I hate to leave such a falsehood uncontested. So if this is obvious, I hope the post is still perhaps useful to point more distant and confused people toward.

~

I personally know that AI risk was not invented by the tech CEOs because I have been near the middle of it since at least 2009—before any of the prominent AI companies existed, let alone had CEOs who might be trying to hype their products.

Here are some miscellaneous events over the years to give you a sense of the implausibility of this:

- 2008 – I attempt to contact Eliezer Yudkowsky to inform him that I am ‘trying to figure out the optimal way to use my life’ and would like to hear a better account of why his plan (of worrying about AI risk) is good. I have read about it online, but would like a clearer account. Later, traveling the world shortly after undergrad, I meet a handful of people in person in the Bay Area who care about this, and one argues strongly that I should prioritize AI risk over my previously preferred causes, e.g. climate change. I decide to think about this.

- 2009 – I am still not very convinced that AI is the most important thing to work on, but go to stay with the people who are worried about it for a few months. I argue about it a lot with a handful of them. There seem to be about twenty of them locally in the South Bay, though many more who comment on the relevant blogs. My photography collection from this era is quite sparse.

I go to The Singularity Summit for my first time (and its fourth), which is very lively and full of people who are thinking seriously about the future of AI.

- 2010 – DeepMind is founded. (I am back at school.)

- 2011 – I start a philosophy PhD at CMU, hoping to be eligible to work at somewhere like the Future of Humanity Institute one day, which is a happening hub of discussion about existential risk, AI and other important issues, that I like to visit.

- 2012 – I visit the Bay more and hang out with the growing AI risk community there. I visit the UK and do the same. I go to the AGI 2012 Winter Intelligence Conference.

- 2013 – I move to Berkeley and work at MIRI for a semester during grad school. I measure algorithmic progress over time across various computer science domains, as input to expectations for artificial intelligence in future. I visit the UK and attend the Center for Effective Altruism’s ‘weekend away’ where we have a debate on which cause is best, between global poverty, animal welfare and extinction risk. Extinction risk wins: the crowd leaves having changed their mind in that direction on net.

(Photo: the three advocates, just before or after.)

- 2014 – I join MIRI properly. I research The Asilomar Conference and Leó Szilárd as evidence about whether it is worth people trying to deal with risks early, because people around mostly believe that the risks from AI are at least a decade away, and there is disagreement about whether that makes it futile. I run an online reading group about Superintelligence, a new book about AI risk. I co-found AI Impacts, a project to answer questions about the future of AI, because AI risk seems at least fairly plausibly the most important thing to work on, and I want to investigate more and share my thinking with others.

- 2015 – I attend the first FLI conference—it seems that more people and more prominent people are interested in AI safety! OpenAI is founded.

- 2016 – I lead a team to run the first Expert Survey on Progress in AI. The median probability given to an outcome of advanced AI that is “Extremely Bad (e.g., human extinction)” is already 5%.

- 2017 – Some people around me are getting very worried, and saying AGI will happen within several years. My survey gets a shocking amount of media attention, becoming the ‘16th most discussed paper’ in 2017 according to Altmetric. Apparently there is interest in this topic.

- 2018 – I go to a big workshop for people working on AI risk in the English countryside, and a Chilean summit where I talk on TV and the radio about AI risk. It feels like interest is still picking up, and I feel optimistic about talking to the public.

- 2019 – GPT-2 comes out. Someone tries to get it to name our house. My favorite names include things like “World peace: tigers and humans” and “rooftop hillside: the highest place in the world”. It is hilarious and useless, but also magical and wild. The things we have worried about for years are feeling more tangible, and people’s ‘AI timelines’ are shrinking.

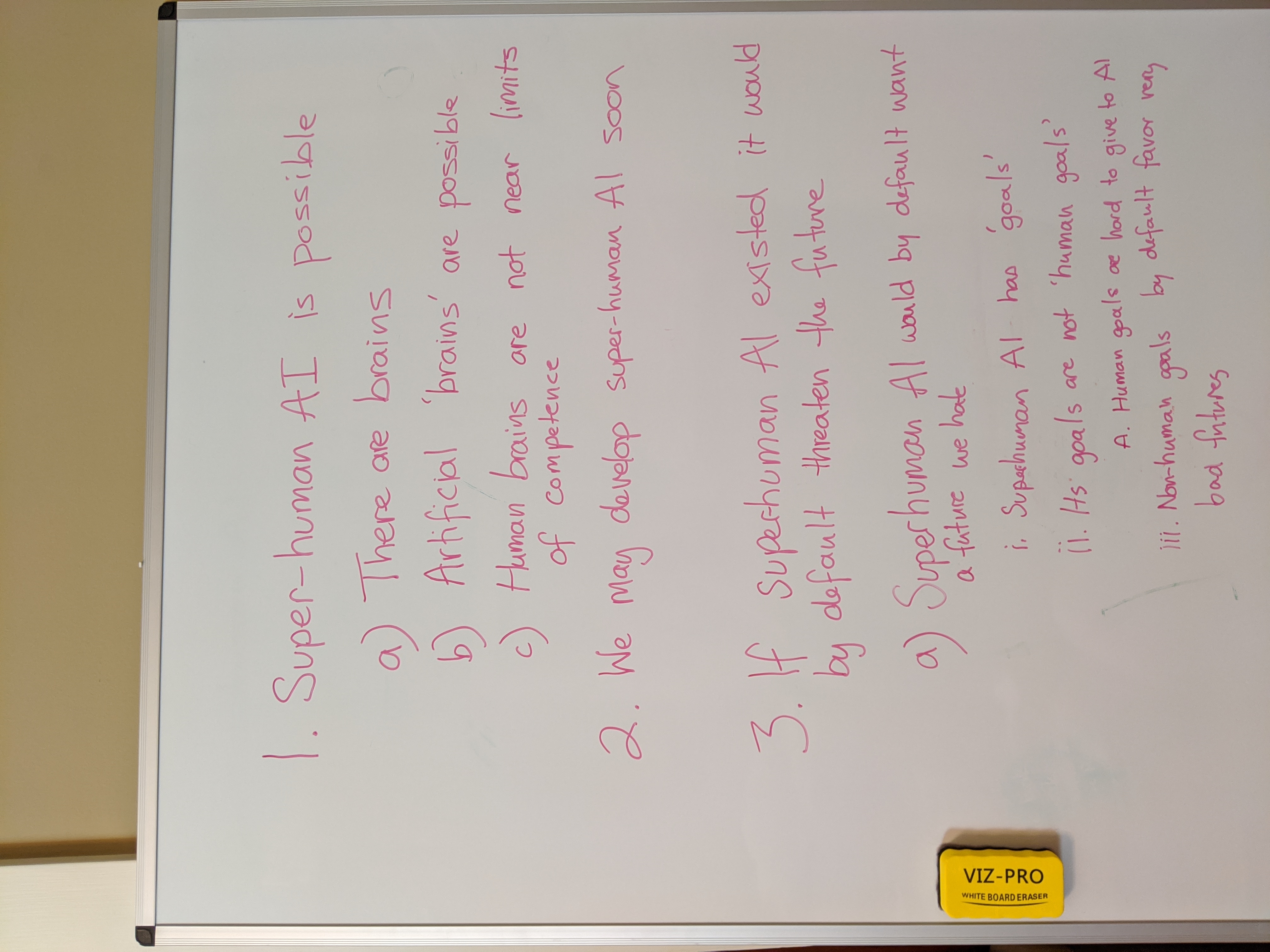

- 2020 – The world is reminded that really crazy things can happen. AI Impacts becomes remote. I spend the year with my household, who are almost all working on AI risk. We enjoy whiteboards a lot and run at least one good house conference in this period.

- 2021 – Anthropic is founded.