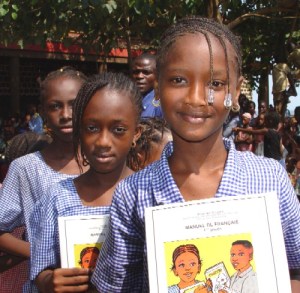

People can be hard to tell apart, even to themselves (picture: Giustino)

Humans make mental models of other humans automatically, and appear to get somewhat confused about who is who at times. This happens with knowledge, actions, attention and feelings:

Just having another person visible hinders your ability to say what you can see from where you stand, though considering a non-human perspective does not:

[The] participants were also significantly slower in verifying their own perspective when the avatar’s perspective was incongruent. In Experiment 2, we found that the avatar’s perspective intrusion effect persisted even when participants had to repeatedly verify their own perspective within the same block. In Experiment 3, we replaced the avatar by a bicolor stick …[and then] the congruency of the local space did not influence participants’ response time when they verified the number of circles presented in the global space.

Believing you see a person moving can impede you in moving differently, similar to rubbing your tummy while patting your head, but if you believe the same visual stimulus is not caused by a person, there is no interference:

[A] dot display followed either a biologically plausible or implausible velocity profile. Interference effects due to dot observation were present for both biological and nonbiological velocity profiles when the participants were informed that they were observing prerecorded human movement and were absent when the dot motion was described as computer generated…

In a task where the cues to act may be incongruent with the actions (a red pointer signals that you should press the left button, whether the pointer points left or right, and a green pointer signals right), incongruent signals take longer to respond to than congruent ones. This effect disappears when you only have to look after one of the buttons. But if someone else takes over the other button, incongruent actions become harder once again:

The identical task was performed alone and alongside another participant. There was a spatial compatibility effect in the group setting only. It was similar to the effect obtained when one person took care of both responses. This result suggests that one’s own actions and others’ actions are represented in a functionally equivalent way.

You can learn to subconsciously fear a stimulus by seeing the stimulus and feeling pain, but not by being told about it. However, seeing the stimulus while watching someone else react to pain works like feeling it yourself:

In the Pavlovian group, the CS1 was paired with a mild shock, whereas the observational-learning group learned through observing the emotional expression of a confederate receiving shocks paired with the CS1. The instructed-learning group was told that the CS1 predicted a shock…As in previous studies, participants also displayed a significant learning response to masked [too fast to be consciously perceived] stimuli following Pavlovian conditioning. However, whereas the observational-learning group also showed this effect, the instructed-learning group did not.

A good summary of all this, Implicit and Explicit Processes in Social Cognition, concludes that we are subconsciously nice:

…implicit processes facilitate the sharing of knowledge, feelings, and actions, and hence, perhaps surprisingly, serve altruism rather than selfishness. On the other hand, higher-level conscious processes are as likely to be selfish as prosocial.

It’s true that these unconscious behaviours can help us cooperate, but they seem no more ‘altruistic’ than the two-faced conscious processes the authors cite as evidence for conscious selfishness. Our subconsciouses are like the rest of us: adeptly ‘altruistic’ when it benefits them, such as when we are being watched. For an example of how well designed we are in this regard, consider the automatic empathic expression of pain we make upon seeing someone hurt. When we aren’t being watched, feeling other people’s pain goes out the window:

…A 2-part experiment with 50 university students tested the hypothesis that motor mimicry is instead an interpersonal event, a nonverbal communication intended to be seen by the other….The victim of an apparently painful injury was either increasingly or decreasingly available for eye contact with the observer. Microanalysis showed that the pattern and timing of the observer’s motor mimicry were significantly affected by the visual availability of the victim.