Is anyone really altruistic? The usual cynical explanations for seemingly altruistic behavior are that it makes one feel good, it makes one look good, and it brings other rewards later. These factors are usually present, but how much do they contribute to motivation?

One way to tell if it’s all about altruism is to invite charity that explicitly won’t benefit anyone. Curious economists asked their guinea pigs for donations to a variety of causes, warning them:

“The amount contributed by the proctor to your selected charity WILL be reduced by however much you pass to your selected charity. Your selected charity will receive neither more nor less than $10.”

Many participants chipped in nonetheless:

We find that participants, on average, donated 20% of their endowments and that approximately 57% of the participants made a donation.

By comparison, average giving is 30-49% in experiments where donating benefits the cause, though it is of course possible that knowing you are helping offers more of a warm glow. Setting 20% against 30-49%, it looks like around half of giving isn’t altruistic at all, unless the participants were interested in the wellbeing of the experimenters’ funds.

The opportunity to be observed by others also influences how much we donate, and we are duly rewarded with reputation:

Here we demonstrate that more subjects were willing to give assistance to unfamiliar people in need if they could make their charity offers in the presence of their group mates than in a situation where the offers remained concealed from others. In return, those who were willing to participate in a particular charitable activity received significantly higher scores than others on scales measuring sympathy and trustworthiness.

This doesn’t tell us whether real altruism exists, though. Maybe there are just a few truly altruistic deeds out there? What would a credibly altruistic act look like?

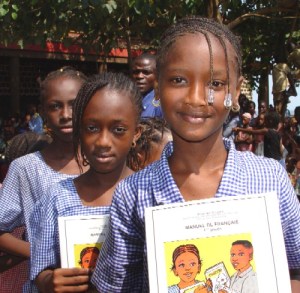

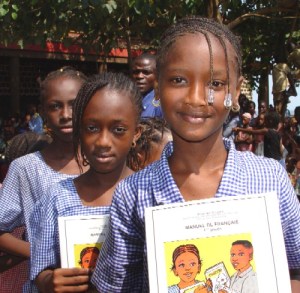

Fortunately for cute children desirous of socially admirable help, much charity is not driven by altruism (picture: Laura Lartigue)

If an act made the doer feel bad, look bad to others, and endure material cost, while helping someone else, we would probably be satisfied that it was altruistic. For instance, if a person killed their much-loved grandmother to steal her money and donate it to a charity they believed would increase the birth rate somewhere far away, at great risk to themselves, the act would seem to escape the usual criticisms. And there is no way you would want to be friends with them.

So why would anyone tell you if they had good evidence they had been altruistic? The more credible the evidence, the worse it should make them look. And if they were keen to tell you about it anyway, you would have to wonder whether it was for show after all. This makes it hard for an altruist to credibly inform anyone that they were altruistic. On the other hand, the non-altruistic should be looking for any excuse to publicize their good deeds. This means the good deeds you hear about should be heavily biased toward the non-altruistic. Even if altruism were all over the place, it should be hard to find. But it’s not, is it?